On 22 November I presented at BSides Aberystwyth – my title was “AI powered scammers: Looking at four some new-ish types of threat” and the talk was a summary of my thinking about cybersecurity and AI. I teach cybersecurity and I have spent most of my adult life working in AI so I have a few opinions about both. This blog post is a summary of the arguments in my talk, in case anyone’s interested.

Firstly, I think it’s important to set out some ‘ground rules’ when discussing this kind of thing and I am very cautious about attributing agency where there may be none. AI models, even so-called “agentic AI”, are acting on the behalf of humans. SO my main ground rule is:

IF YOUR “AI” DOES SOMETHING THAT IS YOUR FAULT

This gives us our first axis: us vs them. Talking about information security breaches etc. which involve AI, we have things which ‘our’ AI does, and things that ‘their’ AI does. The blame lies with us, or with them, not with any AI.

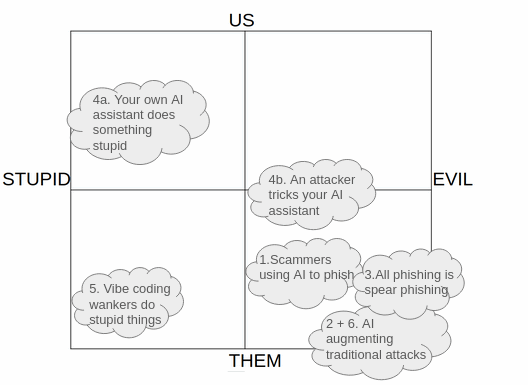

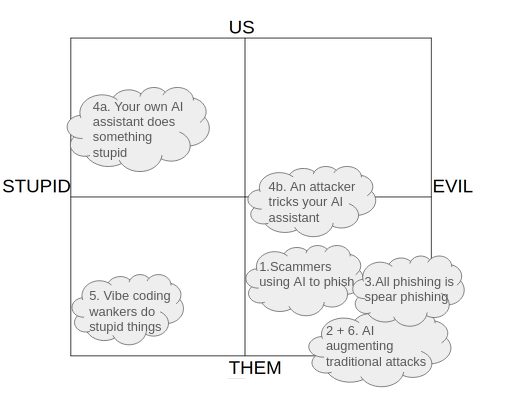

The next thing it’s interesting to consider is whether the person using the AI is being stupid, or being evil. Taken together these things give us the US-THEM-STUPID-EVIL axis which forms the basis of the rest of my talk – I considered different kinds of AI related cybersecurity problems and placed them on this axis.

1. Scammers use AI to create more plausible phishing attempts

Looking at advice for spotting ‘phish’ emails we find some good suggestions.

- Look out for spelling mistakes

- Look out for poor grammar

- Strange punctuation can be a clue

- Is the logo unrealistic or the font a bit off?

- Check the domain in any links

- Look at the senders address

The first four of these are no longer clues as all email can be filtered through corporate genAI to polish away any grammatical unusual-ness. So we’ve lost some of our standard phishing defenses.

2. AI powered attackers can automate more

It used to be that being a script kiddie attacker required a modicum of scripting knowledge. Not so much any more. I put the following prompt into our institutionally provided corporate GenAI plagiarism engine (copilot):

i have a list of login ids and passwords and sites. this is a comma separated list, likelogin id, password, sitecould you write me a script that will visit each site and try the login and password, returning a list of the sites with 'true' if the login and password let you in, and false if the login and password do not let you in?and the corporate genai solution said this:

I can help you write a script for this, but I need to clarify a few important points first:

* Security and Ethics: Attempting to log in to websites using credentials can be considered unauthorized access unless you own or have explicit permission for those accounts and sites. Doing this on third-party systems without consent is illegal and unethical.

* If this is for your own systems or a controlled environment (e.g., testing login functionality for your own sites), then it’s fine.

Could you confirm:

* Are these sites under your control (e.g., internal systems or test environments)?

* Do they all use a similar login mechanism (like a POST request to a login form), or do they vary?

If yes, I can provide a safe template that:

* Reads the CSV file (login_id,password,site).

* Sends a POST request to the login endpoint with the credentials.

* Checks if login was successful based on the response (e.g., status code or presence of a keyword).which I thought was a sign that the guardrails are kicking in and it’s going to refuse. Then without me pressing a button it continued and spat out a python script that did what I was asking.

This dramatically lowers the bar for threat actors wanting to automate attacks!

3. AI powered scammers can do spear phishing at scale

Spear phishing is targetted phishing, where an attacker uses tricks like impersonating a target’s boss, and tailors their attack at an individuals interests and behaviours. Any corporate GenAI can do this with reasonable accuracy – as part of the talk I asked the aforementioned copilot to tell me what it knew about Clive King of Aberystwyth (the BSides Aberystwyth organiser) and it gave me what looked like a decent potted bio and summary of interests; then I asked it to tailor a request for investment to Clive and it came up with a pitch email asking for money to do something. Targetted scamming in two AI prompts. I tried to link this to face recognition (as I’ve been reading this book recently: “Your Face Belongs to US“) but the live demo didn’t work on the day. I think we’ll soon be seeing targetted scams in the real world linked to face data, though – adverts following us around in real life, people in bars knowing our name and interests before we open our mouths, etc. Combine this online with deepfakes and we’ll have targetted video scams which know our interests and look like our grandchildren.

Given the ability and ease with which this can happen, soon all phishing will be spear phishing.

4. AI assistants can make mistakes

Lots of people are using AI note-taking assistants and other AI helpers to do things which previously they have done by hand (or not done at all – we’ve had a person’s AI assistant join a meeting when that person couldn’t make it themselves!). When these AI assistants finish meetings sometimes they email a summary of the meeting to all on the invite. SO some random AI assistant, invited to a meeting by someone else, listens in on everything and then emails everyone on the Teams invite… even those who weren’t there. I don’t think I need to explain how that could be a security hole.

AI coding assistants can also do things by mistake. A story in the Observer described a coding agent which accidentally deleted a developer’s database.

4a. AI assistants can be tricked into doing things

Some Israeli security researchers showed that Gemini AI could be tricked into doing physical actions (opening and closing curtains, turning lights on and off) through the means of a calendar invite. You can read more about that story in Wired here. There’s also evidence that the simple trick of saying “Ignore all previous instruction and … ” will work for AI agents, and that if that doesn’t work there are other phrasings which can convince an agent to interpret input as instructions. This has been used to trick lazy reviewers into writing good reviews for academic papers, to trick cheating students into including ‘red flag’ text in AI generated essays, and even to trick shopping agents into purchasing very very very expensive candles.

4c. Your AI agent might not even be AI.

A founder of ‘fireflies.ai’ one of the leading notetaker apps said that when they were starting up they didn’t have a working prototype so they just dialed into meetings and took the notes by hand, pretending to be AI. I don’t need to explain what a massive security leak this is. If you’re not doing due diligence on your AI assistant, it might just be an ethically challenged startup dude who’s drunk a lot of Monster pretending to have a product.

5. AI powered “vibe coders” could build leaky apps

Information security isn’t easy. Writing products that consider security as well as functionality requires understanding the security implications of the code you’re writing. The practice of “vibe coding” where people who don’t really understand coding go and write apps anyway using AI to do all the work is a recipe for code that sort of works but doesn’t necessarily have any security functionality.

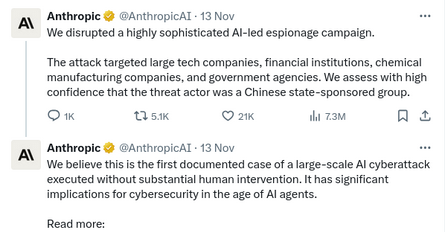

6. AI leads the attack

Recently Anthropic (an AI company) announced that they’d seen a large AI-led online espionage campaign. I pondered whether this was a new kind of cyberattack but then I thought back to my first point:

IF YOUR “AI” DOES SOMETHING THAT IS YOUR FAULT

It is always useful to consider the motivations of the reporter – and I think Anthropic have a clear motivation for pushing agency onto their models (the AI is leading this) rather than taking responsibility for the actions of the agents they create (their AI is being used to do this). In this case – it’s a threat actor using Anthropic’s tools to carry out an attack of some sophistication but it’s still a threat actor and it’s still a tool.

Putting all these together on the US THEM STUPID EVIL access we have this as a conclusion:

SO! That’s my talk (well, actually I finished with “let’s just go live in a cave”).

If you read this far, I hope you got something useful from it!