For EMF2018 (my general blog post about the festival can be found here), Charles Yarnold and my old friend Ben Blundell built a cyberpunk zone called Null Sector, with installations and all sorts of cool stuff.

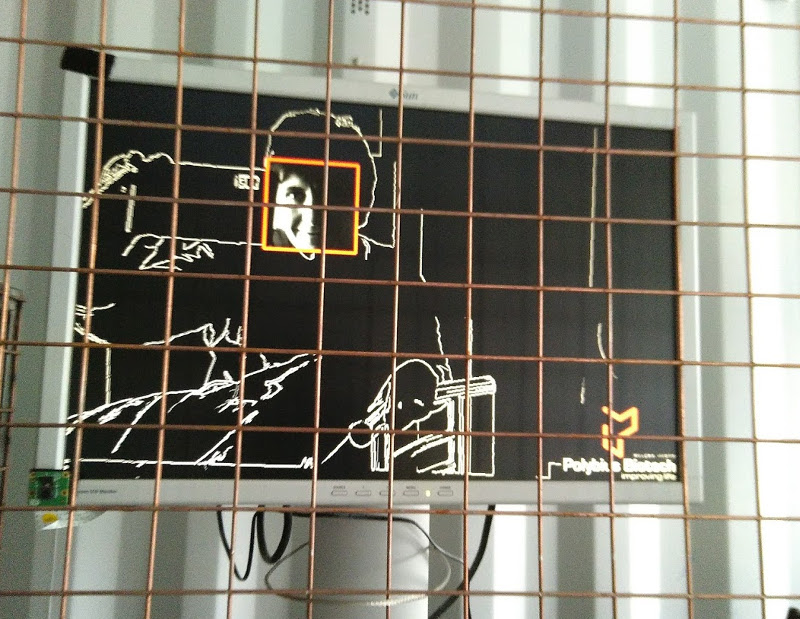

I made a tiny part of this: a surveillance-themed installation which sat behind the cyberpunk-style grille in the bar area.

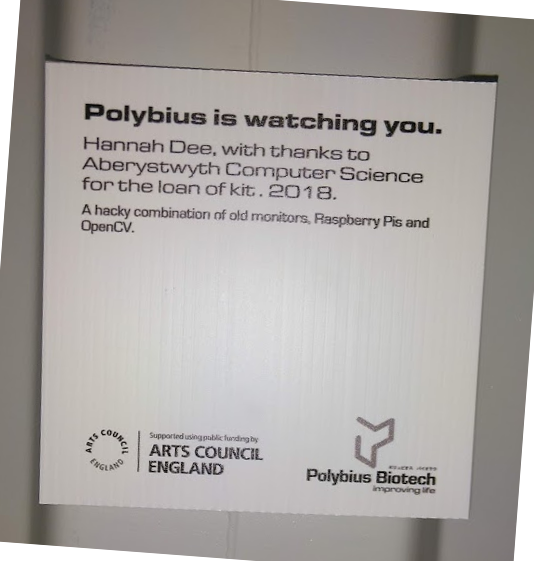

The aim of the installation was to provide a slightly disconcerting surveillance-style view of the people in the bar, matching the general branding of Null Sector, so it seemed as if the company running Null Sector (Polybius Biotech) were carrying out video surveillance of attendees. There was no real video surveillance involved, just a bit of Raspberry Pi/OpenCV smoke and mirrors.

Polybius Biotech logo by Ben, who has real talent at this graphic design stuff.

The Polybius Biotech brand has quite a clear graphic style and a defined palette of colours – orange, magenta, blue and black (mostly). So my aim was to do a bit of face detection and a bit of live video enhancement, cast to this colour scheme, and display a constantly updating video feed.

The way I did this was to have a handful of different background styles (solid black, edge enhanced, edges only, greyscale image, sepia image, colour flipped image, colour image). For each frame grabbed by the camera, the system did some face detection, then applied one of the styles to the main image and another to the face regions. The system then drew boxes around the faces in Polybius Orange or Polybius Blue.
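As a rough illustration of the "one style for the main image, another for the faces" idea, here is a minimal pure-Python sketch. It is not the installation's code (that was C++ with OpenCV): the frame is a tiny nested list of greyscale values, the face box is supplied by hand instead of coming from a detector, and the names `compose`, `in_any_box` and the three style functions are made up for this example.

```python
# Sketch only: frames are nested lists of greyscale values (0-255) and the
# face boxes are hard-coded, standing in for a real camera and face detector.

def solid_black(pixel):
    """Style: discard the pixel entirely."""
    return 0

def greyscale(pixel):
    """Style: keep the pixel unchanged."""
    return pixel

def flipped(pixel):
    """Style: invert the pixel (a one-channel stand-in for 'colour flipped')."""
    return 255 - pixel

def in_any_box(x, y, boxes):
    """True if (x, y) falls inside any (left, top, width, height) box."""
    return any(
        left <= x < left + width and top <= y < top + height
        for (left, top, width, height) in boxes
    )

def compose(frame, boxes, background_style, face_style):
    """Apply face_style inside the boxes and background_style elsewhere."""
    return [
        [
            face_style(p) if in_any_box(x, y, boxes) else background_style(p)
            for x, p in enumerate(row)
        ]
        for y, row in enumerate(frame)
    ]

frame = [
    [10, 20, 30, 40],
    [50, 60, 70, 80],
    [90, 100, 110, 120],
]
faces = [(1, 1, 2, 2)]  # one hypothetical face box: left, top, width, height
result = compose(frame, faces, solid_black, flipped)
```

Swapping the two style arguments gives a different look from the same frame and boxes, which is all the variety the display needed.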

Which combination of styles it used was a random choice, and each choice stuck for a random number of frames. There were 14 different main image and face combinations, and each one would be kept for between 1 and 100 frames, chosen at random. This made watching the screens more interesting: you could never know how long a particular view would go on for, and the views looked pretty different from one another.
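The "pick a combination, hold it for a random number of frames" logic can be sketched in a few lines of Python. This is an illustration, not the original C++: the pairs in `COMBINATIONS` are a hypothetical handful (the post doesn't list the real 14), and `style_schedule` is a name invented here.

```python
import itertools
import random

# Hypothetical (main image style, face style) pairs, for illustration only.
COMBINATIONS = [
    ("solid_black", "colour"),
    ("edges_only", "greyscale"),
    ("greyscale", "colour_flipped"),
    ("sepia", "edge_enhanced"),
]

def style_schedule(combinations, rng, min_frames=1, max_frames=100):
    """Yield one (main, face) pair per frame, forever.

    Each randomly chosen pair is repeated for a random number of frames
    between min_frames and max_frames (both inclusive).
    """
    while True:
        combo = rng.choice(combinations)
        hold = rng.randint(min_frames, max_frames)
        for _ in range(hold):
            yield combo

rng = random.Random(2018)  # seeded only so the demo is repeatable
schedule = style_schedule(COMBINATIONS, rng)
first_frames = list(itertools.islice(schedule, 300))
```

In a capture loop you would call `next(schedule)` once per grabbed frame and hand the resulting pair to whatever does the drawing.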

It went quite well but was not without hiccups. There were two things I could have done to make it better:

- Test the system in lower light conditions – when it got crowded in the shipping container, the light getting to the cameras wasn’t that great, and so sometimes it just didn’t find many faces.

- Optimise the code. I was running the system as fast as it would go, which gave about 3 frames per second. Raspberry Pis are great fun but not massively fast when it comes to live video manipulation. This was fine, as that gave it a cool kinda laggy effect. But the unintended consequence of running the Pis as fast as I could was that they overheated pretty quickly. I think I could have gotten around this with a bit more forethought and a bit more care over the code.
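One possible way to ease the overheating (not something I tried at the time, so treat it as an assumption) is to cap the frame rate a little below what the Pi can manage flat out, so the processor gets to idle between frames. A minimal Python sketch, where `run_capped` and its parameters are names invented for this example:

```python
import time

def run_capped(process_frame, frames, max_fps,
               clock=time.monotonic, sleep=time.sleep):
    """Call process_frame() `frames` times, never faster than max_fps.

    `clock` and `sleep` are injectable so the loop can be tested
    without actually waiting.
    """
    min_interval = 1.0 / max_fps
    for _ in range(frames):
        started = clock()
        process_frame()
        spare = min_interval - (clock() - started)
        if spare > 0:
            sleep(spare)  # the CPU is idle here, which is the point

# Tiny demo: three do-nothing "frames", capped at 100 frames per second.
run_capped(lambda: None, frames=3, max_fps=100)
```

With frames already taking about a third of a second each, a cap of 2 frames per second would leave the processor idle for roughly a third of the time.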

At this point in a blog post I normally say “And here’s the code!”, but to be honest I’m a bit embarrassed about the code quality here – it’s 600 lines of unoptimised C++ all in one file and full of stupid errors. I’ll tidy it up, maybe, and use it for something else. However, I will give a bit of a deep dive into a few of the gory technical details.

GORY TECHNICAL DETAILS

- The face detector was a bog-standard Viola-Jones style cascade classifier, from OpenCV’s standard libraries.

- The edge detector was even more old-fashioned, as it used Canny, which was published in 1986. Works though.

- The input came from a Raspberry Pi camera module, which is a cheap way to get pictures into a Pi, and it has a very small form factor (if you look at the image 2 pictures up you can see it – it’s a little green square on the bottom left of the monitor).

- My monitors were borrowed from Aberystwyth Computer Science and came from a decommissioned computer lab – they were old Sun Microsystems flat screen jobs. These had DVI & VGA inputs but the Pis only have HDMI outputs, so I had to get some adaptors. Turned out the adaptors I bought from eBay were crap, and so I had to find some new cables with a day to go – fortunately, hacker camps are good places to find random cabling.

- OpenCV has some graphics output functionality but it’s a bit clunky. As my monitors were all identical I was able to sidestep the OpenCV graphics, and write directly to the framebuffer. (That is: low-level graphics can be hacked about by accessing the bit of memory which corresponds to the monitor, then writing directly to that memory – this is called the framebuffer). There’s a great tutorial about doing this stuff on a Pi at Raspberry Compote, but in short, you need to…

  - Find out the size of your monitor

  - Find out whether the monitor is RGBA or BGRA or RGB or BGR

  - Set up a pointer to the bit of memory which makes up the monitor input

  - Convert your image to the right format (e.g. RGBA in my case)

  - Splat the image onto the right bit of memory and Hurrah! The display will change

- Finally I set the machines so that they had a fairly cyberpunky desktop background (“The sky above the port was the color of television, tuned to a dead channel”) and to load the video program upon boot. This meant I just had to plug it all together then plug it in and it would all just work.
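To make the framebuffer steps above a bit more concrete, here is a small Python sketch of the last two: converting an image to RGBA and splatting it into place. It is an illustration under assumptions, not my C++. The image is a flat run of BGR bytes (the channel order OpenCV uses by default), an `io.BytesIO` stands in for the real framebuffer so it runs anywhere, it uses ordinary file writes instead of a memory-mapped pointer, and `bgr_to_rgba` and `blit` are names made up here. On a Linux box the framebuffer is typically exposed as the device file `/dev/fb0`, but check the size and pixel order for your own display first.

```python
import io

def bgr_to_rgba(bgr, alpha=255):
    """Convert packed BGR bytes to packed RGBA bytes."""
    if len(bgr) % 3:
        raise ValueError("BGR buffer length must be a multiple of 3")
    pixels = len(bgr) // 3
    out = bytearray(pixels * 4)
    out[0::4] = bgr[2::3]  # red comes from the third byte of each pixel
    out[1::4] = bgr[1::3]  # green stays in the middle
    out[2::4] = bgr[0::3]  # blue comes from the first byte
    out[3::4] = bytes([alpha]) * pixels
    return bytes(out)

def blit(fb, rgba, width, height, stride):
    """Write a width x height RGBA image to the top-left of `fb`.

    `stride` is the number of bytes in one framebuffer row, which can be
    larger than width * 4 if the display is wider than the image.
    """
    row_bytes = width * 4
    for y in range(height):
        fb.seek(y * stride)
        fb.write(rgba[y * row_bytes:(y + 1) * row_bytes])

# Demo: a 2x2 image written into a pretend display that is 3 pixels wide.
image_bgr = bytes([1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12])
image_rgba = bgr_to_rgba(image_bgr)
stride = 3 * 4
fake_fb = io.BytesIO(bytes(stride * 2))  # stand-in for the real device
blit(fake_fb, image_rgba, width=2, height=2, stride=stride)
```

Because `blit` only needs `seek` and `write`, the same function should work on a real framebuffer opened for binary read/write, as long as the width, height, stride and pixel order match the display.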

In all – it was fun, it nearly worked well, people seemed to like it, and I learned a lot about building things for long-term installation in a public place. Next time will work better.